Psychoacoustics is the scientific study of how humans perceive sound and the psychological responses it produces. It covers any sound that reaches the ears, including music, speech, and other everyday sounds.

Music has a powerful hold on the mind. It shapes people’s moods and can profoundly affect how they feel and behave. The right music stirs up your emotions and enhances whatever you are experiencing at the moment. This shows the close connection between music and the mind, and it is why music producers play such a pivotal role in our lives.

To excel in music production, you have to know how your music will affect your listeners’ minds. This will help you create better music and offer your listeners a unique listening experience. For this, you need a basic knowledge of psychoacoustics. So let’s walk through the essentials to satisfy your curiosity!

Understanding Psychoacoustics

Psychoacoustics deals primarily with two areas:

Perception

Perception tells us about the various sensory systems and their function in perceiving sound waves.

Cognition

This area shows how our brain processes the perceived sound.

Perception

Sound reaches our ears as mechanical waves. The inner ear converts these waves into neural signals, which the brain then interprets as sound. Therefore, when processing audio, music producers have to consider the listening environment, the ears, and the brain. Now, let us discover how perception influences music production.

Limits of Perception

Although our auditory system perceives a wide range of sounds, it has limits above or below which perception ceases. This range is called the audible range, and its edges are the limits of perception. The human hearing range depends on:

- The frequency of the sound, heard as pitch (high or low) – measured in hertz (Hz)

- The level of the sound, heard as loudness – measured in decibels (dB)

Humans can typically hear frequencies from about 20 Hz to 20,000 Hz (20 kHz). However, frequencies near and beyond the edges of this range require a much larger sound pressure to become audible.

Frequencies below 20 Hz: These frequencies are known as ‘infrasound.’ We don’t perceive them as a continuous tone; instead, they resemble a series of pulses.

Frequencies above 20 kHz: Our sensitivity drops off sharply toward the upper limit, so sounds approaching 20 kHz are hard to hear and sounds beyond it generally disappear entirely. In addition, 20 kHz is the limit only for individuals with especially sensitive hearing; for less sensitive listeners, the upper limit is lower.
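To make the units above concrete, here is a minimal Python sketch that converts a sound pressure into decibels of sound pressure level (dB SPL) and sorts frequencies into infrasound, audible, and ultrasound. It assumes the standard 20 µPa reference pressure for dB SPL in air and treats 20 Hz and 20 kHz as fixed, nominal limits; the function names are illustrative, not from any library, and real hearing limits vary from person to person.

```python
import math

# Reference pressure for dB SPL in air: 20 micropascals,
# roughly the threshold of hearing at 1 kHz.
P_REF_PA = 20e-6

# Nominal textbook limits of human hearing (assumption: real limits
# vary with the listener and with age).
LOW_LIMIT_HZ = 20.0
HIGH_LIMIT_HZ = 20_000.0


def pressure_to_db_spl(pressure_pa: float) -> float:
    """Convert an RMS sound pressure in pascals to dB SPL."""
    if pressure_pa <= 0:
        raise ValueError("pressure must be positive")
    return 20.0 * math.log10(pressure_pa / P_REF_PA)


def classify(freq_hz: float) -> str:
    """Label a frequency relative to the nominal 20 Hz to 20 kHz band."""
    if freq_hz < LOW_LIMIT_HZ:
        return "infrasound"
    if freq_hz > HIGH_LIMIT_HZ:
        return "ultrasound"
    return "audible"


print(round(pressure_to_db_spl(1.0), 1))  # 94.0
print(classify(10), classify(1000), classify(25_000))
```

For example, a pressure of 1 Pa works out to roughly 94 dB SPL, while the 20 µPa reference itself maps to 0 dB SPL. Because the scale is logarithmic, every tenfold increase in pressure adds 20 dB.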

Sound Localization

Our ability to locate the source of a sound is called sound localization. Our brain compares differences in the arrival time, loudness, and tonal content of the sound waves reaching each ear to deduce where the sound is coming from. Localization is expressed as a three-dimensional position in terms of:

- Horizontal angle

- Vertical angle

- Distance for static sounds

- Velocity for moving sounds

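Human beings locate sounds more precisely in the horizontal direction than in the vertical direction. This is because our ears are placed symmetrically on either side of the head: a sound off to one side reaches the two ears at different times and levels, while sounds at different heights produce much smaller differences between the ears.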
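The timing cue can be estimated with a simple model. The Python sketch below uses Woodworth's spherical-head approximation for the interaural time difference (ITD), the gap between a sound's arrival at the near ear and the far ear. The head radius (8.75 cm) and speed of sound (343 m/s) are assumed typical values, not figures from this article, and the model ignores the level and tonal cues the brain also uses.

```python
import math

# Assumed typical values (not from the article):
HEAD_RADIUS_M = 0.0875       # approximate radius of an adult head
SPEED_OF_SOUND_M_S = 343.0   # speed of sound in air at about 20 degrees C


def itd_woodworth(azimuth_deg: float) -> float:
    """Approximate interaural time difference in seconds for a distant source.

    Woodworth's spherical-head model: ITD = (r / c) * (theta + sin(theta)),
    for azimuths from 0 (straight ahead) to 90 degrees (directly to one side).
    """
    if not 0.0 <= azimuth_deg <= 90.0:
        raise ValueError("azimuth must be between 0 and 90 degrees")
    theta = math.radians(azimuth_deg)
    return (HEAD_RADIUS_M / SPEED_OF_SOUND_M_S) * (theta + math.sin(theta))


for angle in (0, 30, 60, 90):
    # Print the ITD in microseconds for a few horizontal angles.
    print(f"{angle:>2} deg -> {itd_woodworth(angle) * 1e6:6.1f} microseconds")
```

The largest ITD, for a source directly to one side, comes out at roughly 0.66 ms. A source straight ahead gives zero, and so would a source directly overhead, since it is equally far from both ears; that is one reason the vertical position of a sound is harder to judge.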

Equal Loudness Contours

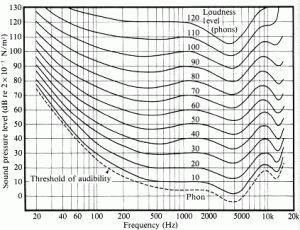

Our brain doesn’t process sound frequencies uniformly across the 20 Hz to 20,000 Hz range. Sound waves are received by an organ in the inner ear called the cochlea, which is lined with hair cells. Different regions of hair cells respond to different frequencies, and they are not spread evenly across the frequency range; their arrangement helps us interpret the most common sounds in our environment. The hair cells convert vibrations into electrical signals that travel to the brain for interpretation. As a result, our sensitivity varies with frequency: we hear mid frequencies most easily and need more sound pressure to hear very low and very high frequencies at the same loudness.

So how loud a sound seems depends on both its sound pressure and its frequency. To map this variation, researchers measured the sound pressure levels at which tones of different frequencies sound equally loud and plotted them as a family of curves. These are called equal-loudness contours, or Fletcher-Munson curves, after the researchers Fletcher and Munson. Understanding these curves helps you balance the frequencies in your mix and deliver the most pleasing result to your listeners.

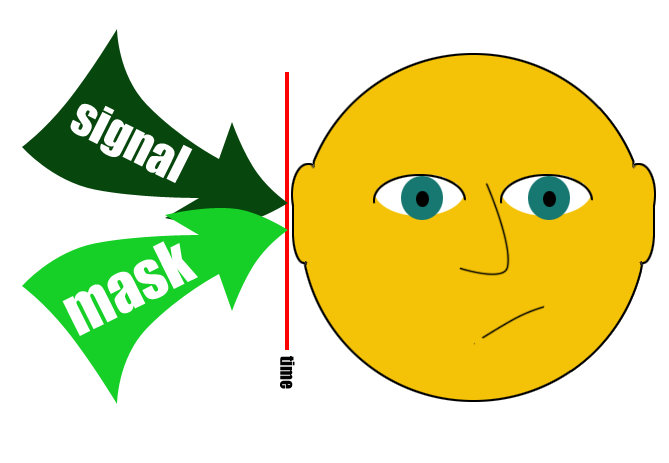
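Equal-loudness contours are the basis for frequency weighting in sound level measurement. As a hedged illustration, the Python sketch below computes the A-weighting curve, a standardized correction loosely derived from a low-level (about 40-phon) equal-loudness contour. It is a simplification of the contours, not the contours themselves; the constants follow the commonly published closed-form A-weighting formula (IEC 61672-1), and the function name `a_weighting_db` is hypothetical.

```python
import math


def a_weighting_db(freq_hz: float) -> float:
    """Return the A-weighting gain in dB at a given frequency.

    Commonly published closed-form A-weighting curve (IEC 61672-1),
    normalised by the +2.0 dB term so the gain is about 0 dB at 1 kHz.
    """
    if freq_hz <= 0:
        raise ValueError("frequency must be positive")
    f2 = freq_hz ** 2
    numerator = (12194.0 ** 2) * f2 ** 2
    denominator = (
        (f2 + 20.6 ** 2)
        * math.sqrt((f2 + 107.7 ** 2) * (f2 + 737.9 ** 2))
        * (f2 + 12194.0 ** 2)
    )
    return 20.0 * math.log10(numerator / denominator) + 2.0


for f in (31.5, 100, 1000, 4000, 16000):
    # Show how strongly each frequency is de-emphasised relative to 1 kHz.
    print(f"{f:>7} Hz: {a_weighting_db(f):6.1f} dB")
```

At 1 kHz the weighting is about 0 dB by design, around 4 kHz it is slightly positive, and at 100 Hz it is roughly -19 dB. Those strongly negative low-frequency values mirror how much less sensitive the ear is to bass at quiet listening levels.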

Audio Masking

When a single sound plays on its own, you can usually hear it clearly. However, when you try to hear multiple sounds simultaneously, you may be unable to tell them apart. This is because sounds close in frequency excite similar regions of hair cells in the cochlea. Two sounds must be separated by a minimum frequency difference for the auditory system to resolve them individually. This phenomenon is called masking: the louder sound makes the quieter one harder to hear, or even inaudible.

To combat masking, you need to equalize your track carefully: cut frequencies that contribute little to your mix and emphasize those that matter.
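The minimum frequency difference mentioned above is usually described in terms of critical bands. As a rough, assumption-laden sketch, the Python code below uses the Glasberg and Moore equivalent rectangular bandwidth (ERB) formula to estimate the auditory filter width at a given frequency, then flags two tones as likely to mask each other when they sit closer together than one ERB. Real masking also depends on level (louder sounds mask over a wider range, and more toward higher frequencies), so treat the hypothetical `likely_to_mask` function as a heuristic, not a mixing rule.

```python
def erb_hz(freq_hz: float) -> float:
    """Approximate equivalent rectangular bandwidth (Hz) of the auditory filter.

    Glasberg & Moore (1990): ERB = 24.7 * (4.37 * f / 1000 + 1), with f in Hz.
    """
    return 24.7 * (4.37 * freq_hz / 1000.0 + 1.0)


def likely_to_mask(f1_hz: float, f2_hz: float) -> bool:
    """Hypothetical heuristic: True if two tones lie within one ERB of each other.

    The ERB is evaluated at the midpoint of the two frequencies.
    """
    centre_hz = (f1_hz + f2_hz) / 2.0
    return abs(f1_hz - f2_hz) < erb_hz(centre_hz)


print(round(erb_hz(1000), 1))       # 132.6
print(likely_to_mask(60, 80))       # True: 20 Hz apart, ERB near 70 Hz is about 32 Hz
print(likely_to_mask(1000, 1500))   # False: 500 Hz apart, ERB near 1250 Hz is about 160 Hz
```

Because the ERB is narrow at low frequencies in absolute terms, two parts competing in the same low region tend to blur together, which is where an EQ cut on one of them can help the other come through.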

Cognition

Music cognition refers to the brain’s ability to decode music. To produce better music, pay attention to the kind of playback equipment your listeners use, such as earbuds, headphones, or car speakers, because it influences how they perceive your music.

Skeuomorphism

One notable application of psychoacoustics is skeuomorphism. It is a design concept applied mainly in UI and web design, in which real-world sounds are mimicked digitally. For example, when you click to move to the next page of an e-book, you may hear a skeuomorphic sound that replicates a page being turned.

Final Thoughts

Thus, as a music producer, your goal is to create better music, and the first step is to understand the science of how people perceive sound. This knowledge helps you anticipate how listeners will respond emotionally, which you can apply to create a memorable listening experience for your audience.